#Introduction

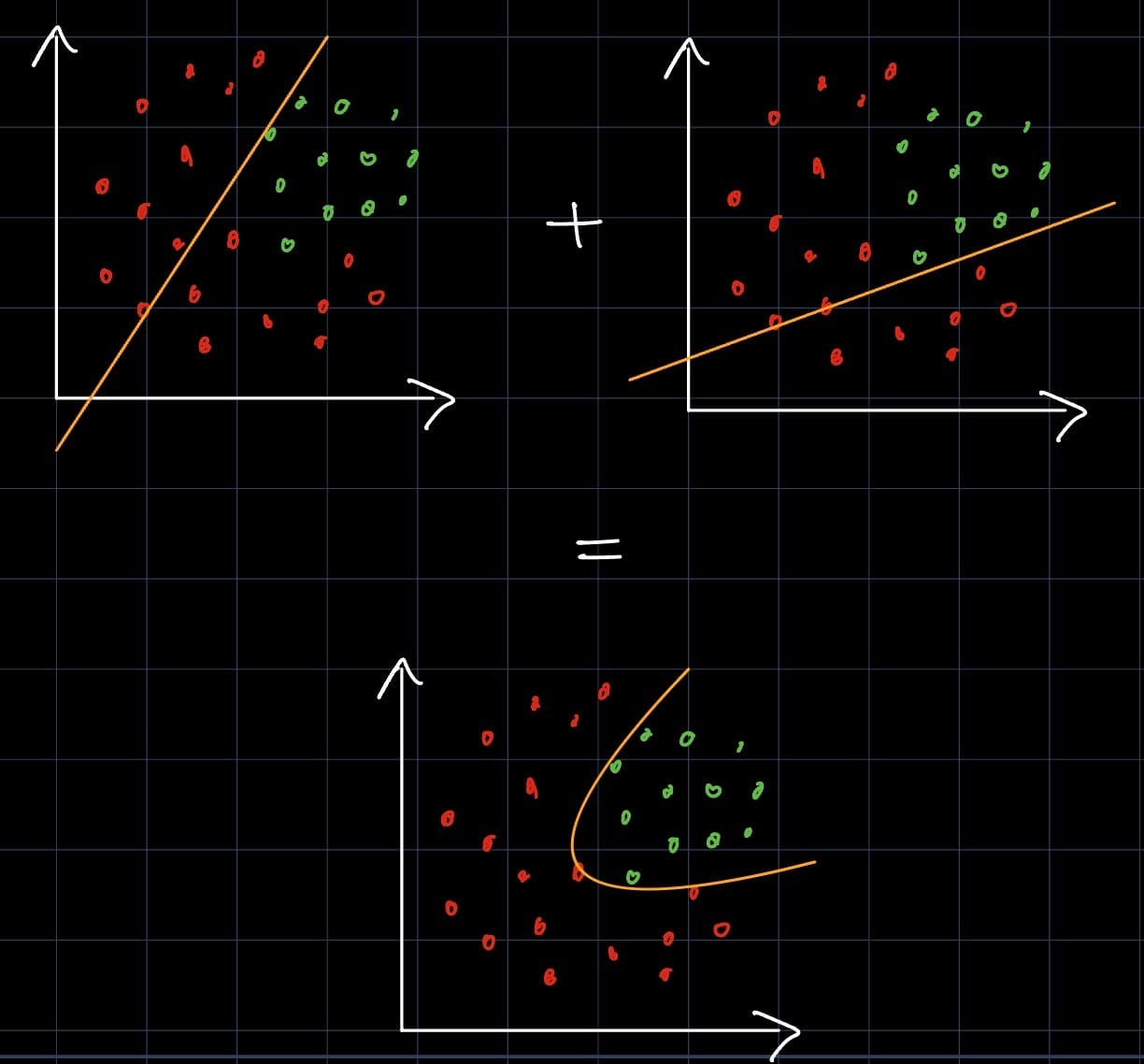

#From Linear to Nonlinear

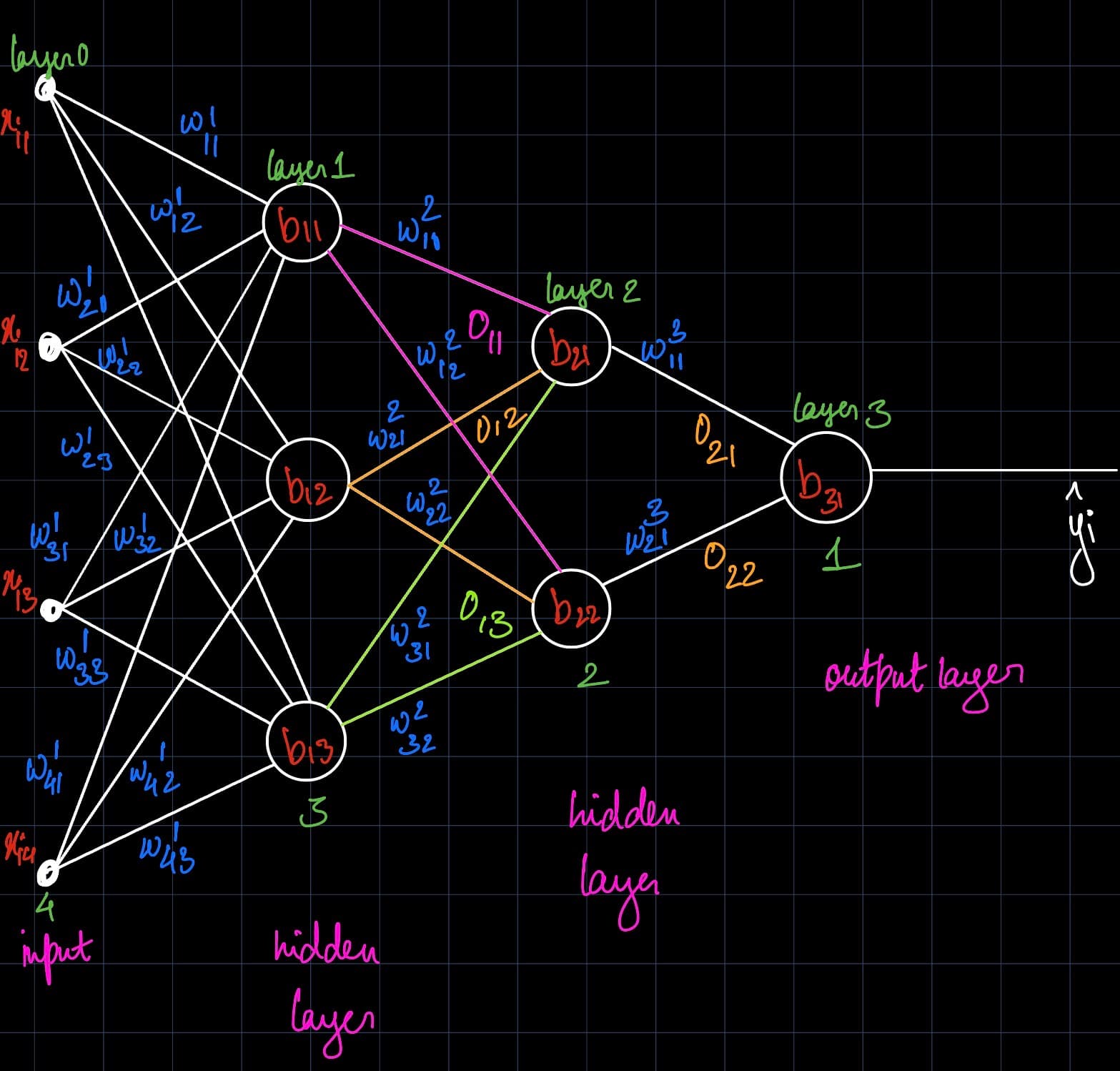

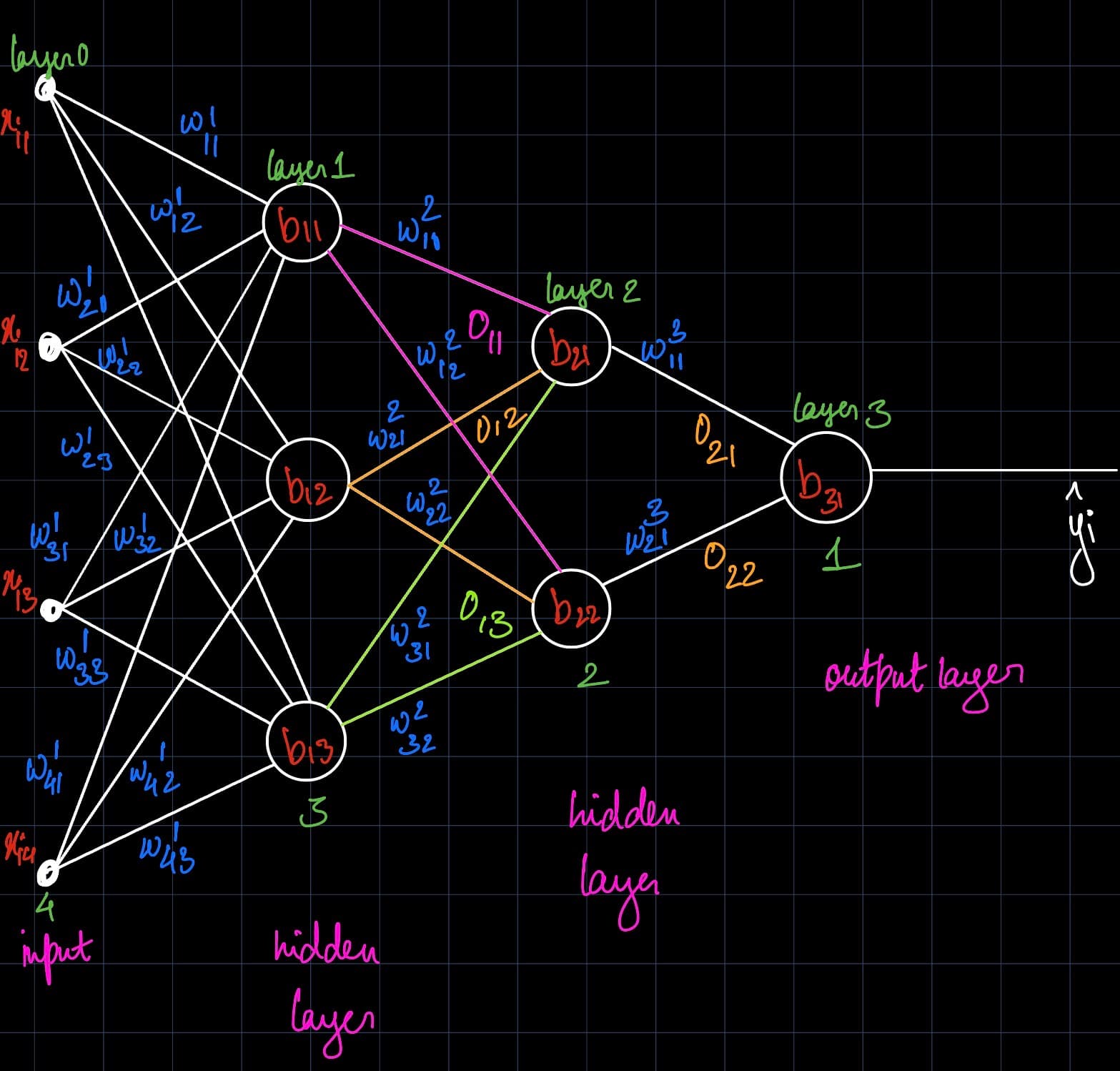

#Understanding Neural Network Architecture

Neural Network Architecture with Notation

#How to Improve Model Accuracy

#Try It Yourself


Ayushi Sahu

AI Engineer


To understand how an LLM is trained, we first need to understand backpropagation. Before we get there, we need to understand the stage where everything begins: forward propagation.
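As a rough illustration of what forward propagation means, here is a minimal sketch in plain Python. It assumes a tiny multi-layer perceptron with sigmoid activations. The layer sizes, the weights, the biases, and the helper names `dense` and `forward` are all hypothetical values chosen for illustration, not taken from the article.

```python
import math


def sigmoid(z):
    """Squash a real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def dense(inputs, weights, biases):
    """One fully connected layer: sigmoid(w . x + b) for each neuron.

    `weights` holds one row of input weights per neuron.
    """
    return [
        sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def forward(x, layers):
    """Forward propagation: feed x through each layer in order."""
    activation = x
    for weights, biases in layers:
        activation = dense(activation, weights, biases)
    return activation


# Hypothetical 2-3-1 network: 2 inputs, 3 hidden neurons, 1 output.
# These numbers are arbitrary; a trained network would learn them.
LAYERS = [
    ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1]),
    ([[0.7, -0.5, 0.2]], [0.05]),
]

output = forward([1.0, 2.0], LAYERS)
print(output)  # a single value between 0 and 1
```

Each layer computes a weighted sum of its inputs, adds a bias, and applies a nonlinearity. Stacking such layers is what lets the network move beyond a linear decision boundary. Backpropagation would later adjust the weights and biases based on how far `output` is from the target.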

From linear decision boundaries to nonlinear curves using multi-layer perceptrons
